2025

RDMM: Fine-Tuned LLM Models for On-Device Robotic Decision Making with Enhanced Contextual Awareness in Specific Domains

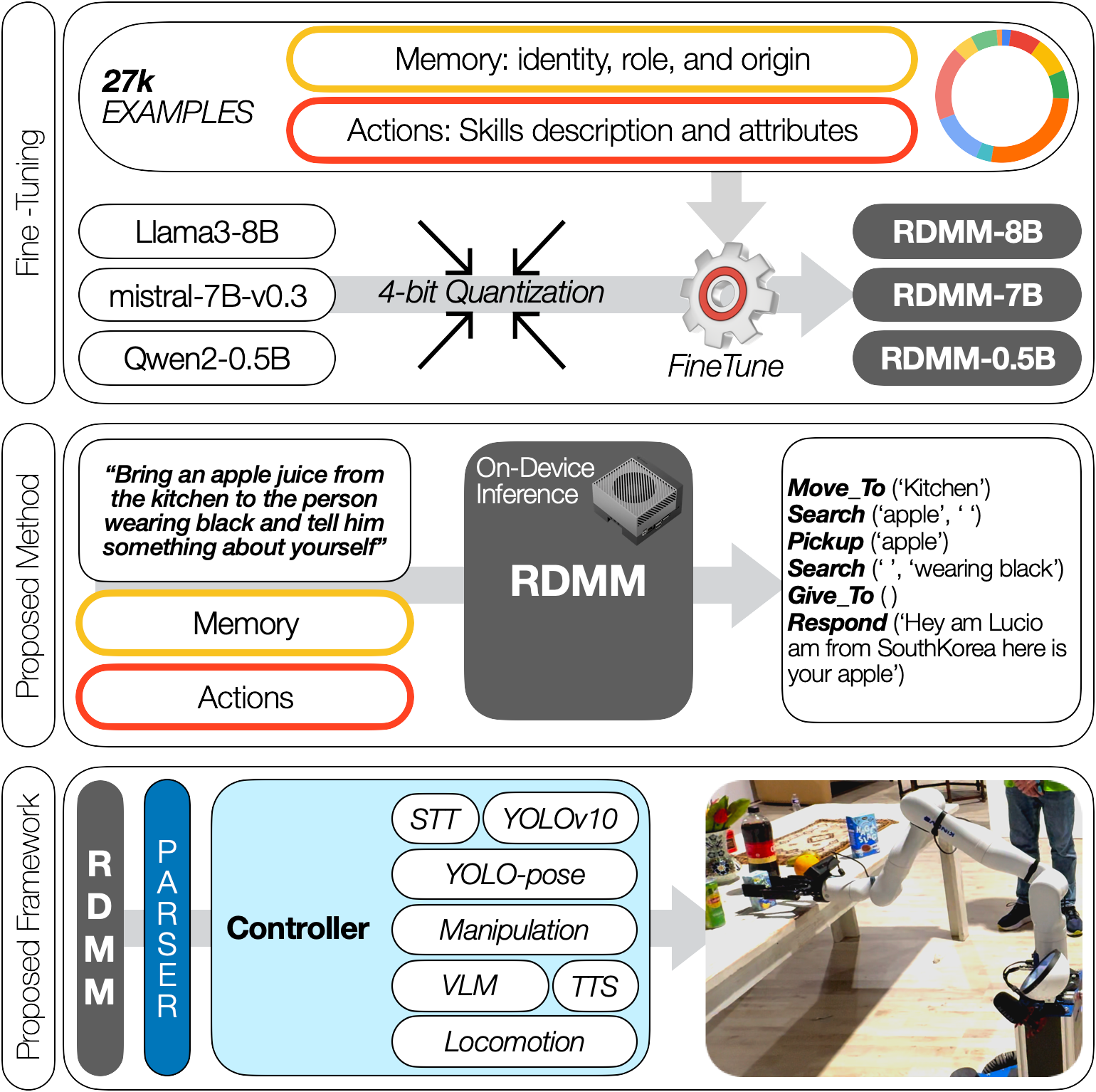

RDMM studies how smaller fine-tuned language models can stay practical for embodied systems by running locally, reasoning over domain-specific household tasks, and incorporating the robot's own context and capabilities.

Shady Nasrat, Minseong Jo, Myungsu Kim, Seonil Lee, Jiho Lee, Yeoncheol Jang, Seung-Joon Yi


Introduction

In the rapidly advancing field of robotics and artificial intelligence, improving the decision-making capabilities of autonomous systems remains a central concern. Large language models (LLMs) can enhance decision-making, interaction, and planning through their linguistic and contextual understanding. Nevertheless, deploying LLMs directly in domain-specific robotic tasks faces two key challenges. First, they have insufficient ability to integrate and leverage personal contextual knowledge about the agent itself, such as its background, capabilities, and specific skills. Second, real-time on-device deployment requires efficient inference, which is limited by the computational cost of large language models.

Many methods have recently been proposed to address the grounding problem of LLMs in robotics. PaLM-E [1] generates control sentences from multi-modal data. RT-X [2] directly infers instructions from language and images. ChatGPT for Robotics [3] requires APIs to be declared so that it can reason about the actions in a task. SayCan [4] selects the most suitable actions according to environmental information. VoxPoser [5] converts the observation space into 3D value maps for generating trajectories. While existing methods can achieve domain-specific planning and handle some partial disturbances, a key limitation is their inability to incorporate the agent’s own knowledge, such as personal background information, capabilities, and skills. This personal contextual knowledge is crucial for well-reasoned question answering that supports effective planning.

For instance, a domestic robot assistant could be asked to deliver an apple to the individual wearing a black t-shirt and then to talk about its recent achievements or favorite color. Existing methods would struggle with this request because the underlying large language models lack access to the robot’s personal knowledge. In contrast, our RDMM framework enables the agent to retrieve and use its own information, including its identity, role, and origin, to formulate an appropriate and informative response, such as "I am Lucio, a household robot assistant. How may I assist you?" or "Hello, I am Lucio, and I originate from South Korea." Another simple request that would challenge other methods is "What can you do?", which requires the robot to understand its own capabilities. Our RDMM framework would respond with a summary of its abilities, such as: "I can help you with tasks such as moving to a location, searching for objects or people, picking up objects, placing them on a surface, and answering questions."

This paper focuses on developing RDMM models by fine-tuning large language models to acquire advanced planning capabilities. First, the study constructed a comprehensive dataset centered on the tasks and rules of the RoboCup@Home competition. Building upon this foundation, the dataset was further expanded to incorporate the agents’ personal knowledge and information regarding their own capabilities and skills. This approach empowers the large language models to not only plan effectively for the given tasks, but also engage in meaningful interactions by providing insightful responses to inquiries about their personal details and abilities, such as their identity, role and background.

This paper makes the following key contributions:

A local framework that leverages RDMM models to enhance robotic decision-making by integrating agent-specific and domain-specific knowledge.

A comparative evaluation against GPT-4o and base LLMs, demonstrating RDMM’s superior planning accuracy and real-time on-device inference capabilities.

Real-world deployment at the RoboCup@Home competition, demonstrating its ability to handle complex robotic tasks within a household environment.

A new publicly available dataset (27k planning instances, 1.3k annotated images) to advance robotic decision-making research.

Method

Dataset Creation

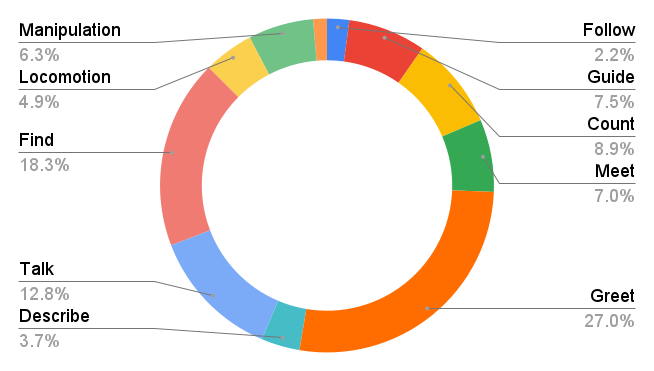

To create a comprehensive dataset for household robots, we drew inspiration from the RoboCup@Home competition tasks, ensuring it covers a diverse set of skills essential for domestic activities. The dataset is structured into three categories: action-oriented tasks, contextual memory retrieval tasks, and hybrid tasks—each designed to enhance the robot’s operational efficiency and decision-making capabilities in real-world environments.

The action-oriented section trains the robot to perform fundamental tasks such as manipulation, navigation, object searching, description, and counting. This ensures the model can generate effective strategies for practical robotic applications.

The contextual memory retrieval section enables the robot to recall and utilize its stored knowledge, improving its ability to understand its capabilities, prior interactions, and personalized information. This allows for more adaptive and human-like interactions, such as guiding, following, and engaging with individuals based on contextual cues.

The hybrid category includes tasks that require both action execution and memory recall, such as retrieving an item and engaging in a conversation that requires recalling relevant details from past interactions.

The dataset consists of 27,514 manually annotated examples, each structured as textual input-output pairs focused on household tasks. It is organized into 42 scenario-based segments, with each scenario categorized under distinct task types, as illustrated in Fig. . The dataset encompasses 21 distinct skills, detailed in Table .

To further enhance the robot’s decision-making and adaptability, system messages provide action descriptions, usage guidelines, and access to stored knowledge, allowing for more informed responses and task execution. This dataset serves as a benchmark for evaluating our models and a valuable resource for training robots in household scenarios. By integrating both action-based and memory-informed decision-making, it enables robots to respond more effectively to task-specific requirements and contextual cues, improving overall interaction and performance in real-world applications.

| Actions | Description |
|---|---|
| Respond(request) | Respond to the user |
| Move_To(location) | Move to a location |
| Pour_In(object) | Pour the object into a container |
| Search_Object(name\(^o\), desc.\(^*\)) | Search for an object |
| Search_Person(name\(^o\), desc.\(^*\)) | Search for a person |
| Pickup() | Pick up an object |
| Place_On(placement) | Place the picked-up object on the placement |
| Place_Next(object) | Place the picked-up object next to the object |
| Give_To() | Give an object to the user |
| Open(object) | Open a door |
| Close(object) | Close a door |
| Vision_Ask(Question\(^*\)) | Ask the VLM and return the result in Answer() |
| Answer() | Retrieve the answer |
| Follow() | Follow a person |
| New_Request() | Take a new request |
| Count_Person(desc.\(^*\)) | Count people and return the result in Answer() |
| Count_Object(name\(^o\), desc.\(^*\)) | Count objects and return the result in Answer() |
| Ask_Name() | Ask for a name and return it in Answer() |
| What_Time() | Retrieve the time |
| What_Day() | Retrieve the date |
| What_Tomorrow() | Retrieve tomorrow’s date |

\(^*\): argument is processed using the VLM; \(^o\): argument is processed using YOLO.

Quantization and Fine-Tuning Details

Llama3-8B [17], Mistral-7B-v0.3 [18], and Qwen2-0.5B [19] were selected as base models for fine-tuning because they balance size and performance well on Jetson edge devices. To improve inference efficiency, the GPTQ [20] method is applied for quantization, compressing the model to 4-bit precision while preserving performance. We also use QLoRA [21], which freezes the pre-quantized model and trains only a small set of new parameters that act as an adapter. Training was conducted with a learning rate of 2.5e-5, capped at 1000 steps, and targeted the q_proj, k_proj, v_proj, o_proj, gate_proj, up_proj, and down_proj layers. QLoRA combines 4-bit NormalFloat (NF4) quantization, Double Quantization, and Low-Rank Adapters (LoRA) [22] to achieve efficient 4-bit quantization. For a single linear layer in the quantized base model with a single LoRA adapter, QLoRA is defined as:

\[\begin{aligned} \textbf{Y}^{BF16} = \textbf{X}^{BF16} \ast doubleDeq(c_{1}^{FP32},c_{2}^{k-bit},\textbf{W}^{NF4}) \\ +\textbf{X}^{BF16}\textbf{L}_{1}^{BF16}\textbf{L}_{2}^{BF16} \end{aligned}\]

where \(doubleDeq\) is the double de-quantization process:

\[\begin{aligned} &doubleDeq(c_{1}^{FP32},c_{2}^{k-bit},\textbf{W}^{k-bit}) \\ &= dequant(dequant(c_{1}^{FP32},c_{2}^{k-bit}),\textbf{W}^{4-bit})\\ &= \textbf{W}^{BF16} \end{aligned}\]

QLoRA uses NF4 for the weights (\(\textbf{W}\)) and FP8 for the quantization constants (\(c_2\)). The block size is set to 64 for \(\textbf{W}\) for higher precision and 256 for \(c_2\) to conserve memory. During the backward pass, only the gradients with respect to the LoRA adapter weights (\(\frac{\partial E}{\partial \textbf{L}_i}\)) are computed, not those with respect to the 4-bit weights (\(\frac{\partial E}{\partial \textbf{W}}\)). However, computing \(\frac{\partial E}{\partial \textbf{L}_i}\) involves calculating \(\frac{\partial \textbf{X}}{\partial \textbf{W}}\), which requires dequantizing the stored \(\textbf{W}^{NF4}\) to the computation data type \(\textbf{W}^{BF16}\). In summary, QLoRA uses 4-bit NormalFloat as the storage data type and 16-bit BrainFloat as the computation data type. The storage data type is dequantized to the computation data type for the forward and backward passes, but gradients are computed only for the LoRA parameters, in 16-bit precision. Training took 24 minutes for RDMM-8B, 11 minutes for RDMM-7B, and 5 minutes for RDMM-0.5B on a single NVIDIA RTX 4090 GPU.
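The split between a compact storage type and a higher-precision computation type can be illustrated with a small pure-Python sketch. It is a simplified stand-in, not the QLoRA kernels: it assumes a uniform 16-level codebook in place of the NF4 codebook, 8-bit integer codes in place of FP8 for the block scales, and tiny block sizes so the example stays readable. All function names are hypothetical.

```python
# Simplified illustration of double dequantization plus a LoRA adapter.
# Assumptions (not from the paper): uniform 16-level codebook instead of NF4,
# 8-bit integer codes instead of FP8 for the scales, toy block sizes.

LEVELS = [i / 7.5 - 1.0 for i in range(16)]  # 16 evenly spaced values in [-1, 1]

def quantize_blocks(weights, block_size):
    """Block-wise absmax quantization: 4-bit codes plus one scale per block."""
    codes, scales = [], []
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        scale = max(abs(w) for w in block) or 1.0
        scales.append(scale)
        for w in block:
            target = w / scale
            codes.append(min(range(16), key=lambda i: abs(LEVELS[i] - target)))
    return codes, scales

def quantize_scales(scales, block_size):
    """Second level: store the block scales (c2) as 8-bit codes, one c1 per group."""
    c2_codes, c1 = [], []
    for start in range(0, len(scales), block_size):
        group = scales[start:start + block_size]
        top = max(group)
        c1.append(top)
        c2_codes.extend(round(s / top * 255) for s in group)
    return c2_codes, c1

def double_dequant(c1, c2_codes, w_codes, w_block, c2_block):
    """dequant(dequant(c1, c2), W): recover the scales first, then the weights."""
    scales = [c1[i // c2_block] * code / 255 for i, code in enumerate(c2_codes)]
    return [LEVELS[code] * scales[i // w_block] for i, code in enumerate(w_codes)]

def matvec(x, matrix):
    """Row vector times matrix (matrix given as a list of rows)."""
    return [sum(x[i] * matrix[i][j] for i in range(len(x)))
            for j in range(len(matrix[0]))]

def qlora_forward(x, w_deq, d_out, l1, l2):
    """Y = X * W_dequantized + X * L1 * L2 for a single input row x."""
    w = [w_deq[r * d_out:(r + 1) * d_out] for r in range(len(x))]
    base = matvec(x, w)
    adapter = matvec(matvec(x, l1), l2)
    return [b + a for b, a in zip(base, adapter)]

weights = [0.5, -0.25, 0.1, 0.0, -1.0, 0.75, 0.2, -0.6]  # 4x2 matrix, row-major
w_codes, scales = quantize_blocks(weights, block_size=4)
c2_codes, c1 = quantize_scales(scales, block_size=2)
w_deq = double_dequant(c1, c2_codes, w_codes, w_block=4, c2_block=2)

x = [1.0, 0.0, 0.0, 0.0]            # selects the first row of W
l1 = [[0.0], [0.0], [0.0], [0.0]]   # rank-1 adapter, zero here so it adds nothing
l2 = [[1.0, 1.0]]
y = qlora_forward(x, w_deq, 2, l1, l2)
```

Only `l1` and `l2` would receive gradients during training; the codes and scales stay frozen and are dequantized on the fly each time the layer is evaluated.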

Framework Overview

Parser & Controller

The parser component of our framework is responsible for translating the RDMM-generated plans into actionable commands that the robot can execute. The controller then interprets these commands and interacts with various models, such as VLMs, YOLO, STT and TTS models, to perform specific tasks.
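A minimal sketch of how such a parser might work is given below. The plan syntax (a comma-separated sequence of `Action(arg, ...)` calls with quoted string arguments), the example plan, and the `ARG_BACKENDS` mapping are assumptions made for illustration; the mapping follows the YOLO and VLM markers in the action table, but the actual RDMM parser may differ. Arguments that themselves contain quotes or parentheses are not handled.

```python
import ast
import re

# Which perception backend each positional argument is sent to, following the
# action table's markers (o -> YOLO, * -> VLM). The layout is an assumption.
ARG_BACKENDS = {
    "Search_Object": ("yolo", "vlm"),
    "Search_Person": ("yolo", "vlm"),
    "Count_Object": ("yolo", "vlm"),
    "Count_Person": ("vlm",),
    "Vision_Ask": ("vlm",),
}

CALL = re.compile(r"(\w+)\(([^()]*)\)")

def parse_plan(plan_text):
    """Turn 'Action(arg, ...)' calls into (name, args) tuples, in order."""
    plan_text = plan_text.replace("’", "'").replace("‘", "'")  # normalise quotes
    steps = []
    for name, raw_args in CALL.findall(plan_text):
        args = ast.literal_eval(f"({raw_args},)") if raw_args.strip() else ()
        steps.append((name, tuple(str(a) for a in args)))
    return steps

def route_arguments(steps):
    """List (action, argument, backend) for each non-empty perception argument."""
    routed = []
    for name, args in steps:
        for arg, backend in zip(args, ARG_BACKENDS.get(name, ())):
            if arg.strip():
                routed.append((name, arg, backend))
    return routed

# Hypothetical plan for "bring an apple to the person wearing a black t-shirt".
plan = ("Move_To('kitchen'), Search_Object('apple', ''), Pickup(), "
        "Search_Person('', 'wearing black t-shirt'), Give_To()")
steps = parse_plan(plan)
routed = route_arguments(steps)
```

In this sketch, `steps` preserves the execution order for the controller, while `routed` shows that the object name would go to YOLO and the person description to the VLM.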

Vision Language Model

Visual perception models play a vital role in enabling robots to understand and interact with their surroundings. We utilize a 4-bit quantized Vision-Language Model (VLM)[23] to process contextual cues and extract detailed visual information. This model accurately describes people, objects, and scenes, serving as a reliable source of visual intelligence. For instance, the VLM can determine whether a person is wearing shoes or holding a cup. In Fig. , within the actions + contextual memory retrieval example, the generated plan includes the action Search_Person(’ ’, ’wearing black t-shirt’), where the VLM processes the second argument to interpret and identify the described individual.

YOLO Model

Real-time object detection for robotic manipulation depends first on accurately identifying objects in the environment. To achieve this, we trained a YOLOv10L model on an annotated dataset of 1.3k images sourced from the RoboCup@Home competition. In Fig. , within the actions example, the generated plan includes the action Search_Object(’cereal’, ’ ’), where the first argument is processed by YOLO to detect the object’s location. Additionally, for human detection and pose estimation, we use the YOLOv8-pose model.

Automatic Speech Recognition

We use Whisper for speech recognition, transcribing audio into text and providing feedback to indicate the robot is listening. For natural responses, we use Seliro-TTS for human-like text-to-speech.

Experiments

We evaluated the accuracy, on-device compatibility and inference speed of our RDMM models, comparing them to baseline models, GPT-4o-mini and GPT-4o. Additionally, we tested our model’s real-world performance during the RoboCup@Home competition.

Models Planning Accuracy

The graph in Fig. compares the planning accuracy of several models across tasks. It highlights the strong performance of the RDMM models (RDMM-8B, RDMM-7B, and RDMM-0.5B) relative to their base models, GPT-4o-mini, and GPT-4o. Both the base and GPT models were conditioned with 20-shot examples from the dataset to ensure a fair evaluation on each task. RDMM-8B achieves the highest average accuracy, 92.98%, up from its base model’s 44.34%, with particularly strong results in tasks such as "Follow," "Meet," and "Simple." Similarly, RDMM-7B reaches 87.21%, surpassing both its base model (38.48%) and the other models compared, including GPT-4o. RDMM-0.5B, while much smaller, still improves markedly over its base model, from 1.75% to 54.44%. It trails GPT-4o (58.74%) but outperforms GPT-4o-mini (52.23%), remaining competitive despite its size.
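The size of these gains is easy to check from the reported averages. The short sketch below only restates the numbers quoted above; the helper names are hypothetical.

```python
# Average planning accuracy (%) as reported in the text: (base model, RDMM model).
ACCURACY = {
    "RDMM-8B": (44.34, 92.98),
    "RDMM-7B": (38.48, 87.21),
    "RDMM-0.5B": (1.75, 54.44),
}
GPT_ACCURACY = {"GPT-4o": 58.74, "GPT-4o-mini": 52.23}

def gain_points(model):
    """Absolute improvement over the base model, in percentage points."""
    base, tuned = ACCURACY[model]
    return round(tuned - base, 2)

def beats(model, reference):
    """True if the fine-tuned model's average exceeds the GPT reference."""
    return ACCURACY[model][1] > GPT_ACCURACY[reference]

gains = {model: gain_points(model) for model in ACCURACY}
beats_gpt4o = [model for model in ACCURACY if beats(model, "GPT-4o")]
```

Each fine-tuned model gains roughly 49 to 53 percentage points over its base model, and the two larger models exceed GPT-4o’s average.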

On-Device Inference Compatibility

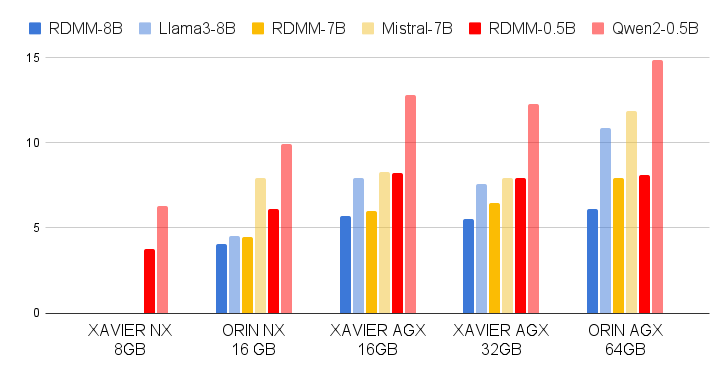

The compatibility of RDMM models for on-device inference was evaluated across various Jetson hardware platforms, including the Orin AGX 64GB, Xavier AGX 32GB, Xavier AGX 16GB, Orin NX 16GB, and Xavier NX 8GB, all of which employ ARM architecture with integrated RAM and VRAM.

RDMM On-Device Compatibility

The RDMM models—RDMM-8B, RDMM-7B, and RDMM-0.5B—were tested to ensure local inference on these devices. RDMM-8B, requiring 1.1GB RAM and 8.5GB VRAM, and RDMM-7B, requiring 1GB RAM and 6.8GB VRAM, successfully operated on most platforms. However, the Xavier NX 8GB, with limited memory, could only support the RDMM-0.5B model, which demands 0.34GB RAM and 1.9GB VRAM. The larger RDMM models exceeded the available memory on the Xavier NX 8GB, highlighting the importance of aligning model size with hardware constraints for effective on-device inference.

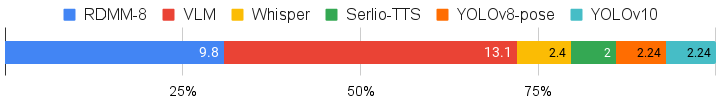

Framework On-Device Compatibility

We also evaluated the full system framework, including the VLM, Whisper, Serlio-TTS, YOLOv8-pose, and YOLOv10, alongside the RDMM model. The results, illustrated in Fig., show the share of memory used by each model on a local device. The entire system required 30GB of memory, making the 32GB Xavier AGX the smallest device capable of running it.
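The compatibility results in this section can be approximated with a simple memory-budget check. The sketch below uses the RAM and VRAM figures reported above and treats each Jetson’s memory as a single shared pool. The 1GB reserve for the operating system and other processes is an assumption introduced here so that the check reproduces the reported outcome on the Xavier NX 8GB; the paper does not state how much headroom is needed.

```python
# Reported per-model requirements in GB: (RAM, VRAM).
MODELS_GB = {
    "RDMM-8B": (1.1, 8.5),
    "RDMM-7B": (1.0, 6.8),
    "RDMM-0.5B": (0.34, 1.9),
}
# Shared (unified) memory per Jetson device, in GB.
DEVICES_GB = {
    "Orin AGX 64GB": 64,
    "Xavier AGX 32GB": 32,
    "Xavier AGX 16GB": 16,
    "Orin NX 16GB": 16,
    "Xavier NX 8GB": 8,
}
RESERVED_GB = 1.0  # assumption: headroom for the OS and other processes

def models_that_fit(device_gb, reserved_gb=RESERVED_GB):
    """Models whose RAM + VRAM fit in the device's remaining shared memory."""
    budget = device_gb - reserved_gb
    return [name for name, (ram, vram) in MODELS_GB.items() if ram + vram <= budget]

def smallest_device(required_gb, reserved_gb=RESERVED_GB):
    """Smallest listed device that can hold a workload of the given size."""
    candidates = [(cap, name) for name, cap in DEVICES_GB.items()
                  if cap - reserved_gb >= required_gb]
    return min(candidates)[1] if candidates else None

fits_on_nx8 = models_that_fit(DEVICES_GB["Xavier NX 8GB"])
fits_on_nx16 = models_that_fit(DEVICES_GB["Orin NX 16GB"])
full_system_device = smallest_device(30.0)  # full framework needs 30GB
```

Under these assumptions only RDMM-0.5B fits on the 8GB device, all three models fit on the 16GB devices, and the 30GB full framework first fits on the Xavier AGX 32GB.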

Models Inference Speed Comparison

The graph in Fig. compares the inference speed of RDMM models against other models on various Jetson devices, showing a trade-off between speed and enhanced capabilities. RDMM models are slower than their base models (Llama3-8B, Mistral-7B, and Qwen2-0.5B); this slowdown is mainly due to the Progressive Fine-Tuning with Layer-wise Re-calibration approach, which incorporates a QLoRA compact neural network adapter.

For example, on the Orin AGX 64GB, the RDMM-8B model achieved 6.12 tokens per second (T/s), compared to Llama3-8B’s 10.86 T/s and Mistral-7B’s 11.87 T/s. Similarly, on the Xavier AGX 32GB, the RDMM-8B model achieved 5.54 T/s, while Llama3-8B and Mistral-7B reached 7.56 T/s and 7.95 T/s, respectively. On smaller platforms like the Xavier AGX 16GB and Orin NX 16GB, RDMM models still performed competitively. For instance, on the Orin NX 16GB, RDMM-0.5B achieved 6.12 T/s, compared to Qwen2-0.5B’s 9.90 T/s. Even on the entry-level Xavier NX 8GB, where only RDMM-0.5B was operable, it managed 3.75 T/s, demonstrating its capability on limited hardware.
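To put these throughput figures in perspective, the sketch below computes the relative slowdown of each RDMM model against its own base model and the time needed to generate a plan. The 50-token plan length is an assumption chosen for illustration, not a figure from the paper, and the helper names are hypothetical.

```python
# Tokens per second quoted in the text, grouped by device.
TPS = {
    "Orin AGX 64GB": {"RDMM-8B": 6.12, "Llama3-8B": 10.86},
    "Xavier AGX 32GB": {"RDMM-8B": 5.54, "Llama3-8B": 7.56},
    "Orin NX 16GB": {"RDMM-0.5B": 6.12, "Qwen2-0.5B": 9.90},
}
BASE_OF = {"RDMM-8B": "Llama3-8B", "RDMM-0.5B": "Qwen2-0.5B"}

def slowdown_percent(tuned_tps, base_tps):
    """How much slower the fine-tuned model is, as a percentage of base speed."""
    return (1.0 - tuned_tps / base_tps) * 100.0

def seconds_to_generate(num_tokens, tps):
    """Time to emit num_tokens at a steady decoding rate."""
    return num_tokens / tps

def report(plan_tokens=50):  # assumption: a plan of about 50 tokens
    rows = []
    for device, speeds in TPS.items():
        for tuned, base in BASE_OF.items():
            if tuned in speeds and base in speeds:
                rows.append((
                    device,
                    tuned,
                    round(slowdown_percent(speeds[tuned], speeds[base]), 1),
                    round(seconds_to_generate(plan_tokens, speeds[tuned]), 1),
                ))
    return rows

rows = report()
```

On these figures the fine-tuned models run roughly 27% to 44% slower than their base models, and a plan of the assumed length would take on the order of eight to nine seconds to generate on the devices listed.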

Real World Deployment

The real-world deployment of the RDMM models took place during the RoboCup@Home Competition, using Lucio, a custom-built home service robot platform. In this environment, the RDMM models were responsible for handling various household and service-oriented tasks that required not only decision-making but also a level of contextual memory retrieval. These tasks involved navigating through complex environments, following people while carrying luggage, and guiding individuals to specific locations. Lucio’s ability to understand its role was essential in tasks such as acting as a receptionist or handing items to people, where it needed to interact naturally and engage in small talk, as shown in Fig.. An example of this is guiding a person while engaging in small talk about a specific topic, highlighting how contextual memory retrieval improves interaction and enhances service quality in real-world situations.

Conclusion

This research introduces RDMM models to address challenges in domain-specific robotic tasks, enhancing planning, interaction, and execution through contextual memory retrieval. By incorporating agent-specific knowledge and past experiences, RDMM enables real-time, on-device inference with high accuracy, operating on edge devices with as little as 8GB of memory. This reduces reliance on cloud-based systems, improving affordability, privacy, security, and reliability for real-world deployment.

We also present a comprehensive dataset consisting of 27,000 planning instances and 1,300 annotated text-image samples, which serves as a valuable benchmark for future research in robotic decision-making with embedded knowledge representation. Looking ahead, we aim to expand RDMM’s capabilities to multi-agent environments, explore lifelong learning approaches, and assess its effectiveness in broader real-world applications beyond RoboCup@Home.

References

*This project was funded by the Police-Lab 2.0 Program (www.kipot.or.kr), funded by the Ministry of Science and ICT (MSIT, Korea) and the Korean National Police Agency (KNPA, Korea) (No. 082021D48000000), and by a Korea Institute for Advancement of Technology (KIAT) grant funded by the Korea Government (MOTIE) (P0008473, HRD Program for Industrial Innovation).

The authors are with the Faculty of Electrical Engineering, Pusan National University, Busan, South Korea (seungjoon.yi@pusan.ac.kr).